Not for the first time, the glut of incoming information threatens to reduce useful knowledge to a mere cloud of data. Activity streams and linked data are two of the more interesting tools for aiding research amid this onrushing surge of information. In this screen-mediated age, the advantages of deep focus and hyper attention are tangled as never before, because the rewards accrue to the company that can collect the most data, aggregate it, and repurpose it for willing marketers.

N. Katherine Hayles does an excellent job of distinguishing between the uses of hyper and deep attention without privileging either. Her point is simple: Deep attention is superb for solving complex problems represented in a single medium, but it comes at the price of environmental alertness and flexibility of response. Hyper attention excels at negotiating rapidly changing environments in which multiple foci compete for attention; its disadvantage is impatience with focusing for long periods on a noninteractive object such as a Victorian novel or complicated math problem.

Does data matter?

The MESUR project is one of the more interesting research projects going, and it now lives on as a product from Ex Libris called bx. Under the hood, MESUR looks at the patterns of researchers' searches, not simply the number of hits, and stores that information as triples: subject-predicate-object statements in RDF, the Resource Description Framework. RDF triple stores can put the best of us to sleep, so one way to think of them is as smart filters. Having semantic information available allows computers to distinguish between Apple the fruit and Apple the computer company.
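To make the triple idea concrete, here is a minimal sketch in Python. It is not MESUR's schema: the `ex:` identifiers are hypothetical, made up for this illustration, and a real system would use a proper triple store queried with SPARQL rather than a set of tuples. It shows how a single type statement lets a machine tell the two Apples apart even though both carry the same label.

```python
# A toy in-memory triple store: each fact is a (subject, predicate, object) tuple.
# The "ex:" identifiers are hypothetical, invented for this illustration.
TRIPLES = {
    ("ex:Apple_Inc", "rdfs:label", "Apple"),
    ("ex:Apple_Inc", "rdf:type", "ex:Company"),
    ("ex:Apple_Inc", "ex:makes", "ex:Macintosh"),
    ("ex:Apple_fruit", "rdfs:label", "Apple"),
    ("ex:Apple_fruit", "rdf:type", "ex:Fruit"),
    ("ex:Apple_fruit", "ex:growsOn", "ex:Malus_domestica"),
}


def match(s=None, p=None, o=None, triples=TRIPLES):
    """Return every triple matching the pattern; None acts as a wildcard."""
    return [
        t for t in triples
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    ]


def disambiguate(label, rdf_type):
    """Return the subjects that carry this label AND have the given type."""
    labelled = {s for s, _, _ in match(p="rdfs:label", o=label)}
    typed = {s for s, _, _ in match(p="rdf:type", o=rdf_type)}
    return sorted(labelled & typed)


print(disambiguate("Apple", "ex:Company"))  # ['ex:Apple_Inc']
print(disambiguate("Apple", "ex:Fruit"))    # ['ex:Apple_fruit']
```

The string "Apple" alone matches two subjects; it is the extra `rdf:type` statement, intersected with the label match, that does the filtering.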

In use, semantic differentiation gives striking information gains. I picked up the novel Desperate Characters, by Paula Fox. While reading it, I remembered that I had first heard of it in an essay by Jonathan Franzen, who wrote the foreword to the edition I purchased. That essay was published in Harper’s, and the RDF framework in use on harpers.org let me see both articles by Franzen and articles about him. This semantic disambiguation is the obverse of the firehose of information that is monetized through advertising.

Since MESUR pulls information from the SFX link resolver service logs of Caltech and Los Alamos National Laboratory, an immediate relevance filter is applied, given that the users are scientists doing research at those institutions. Using the information contained in the logs, it’s possible to see whether a given IP address (belonging to a faculty member or department) goes through an involved research process or a short one. The researcher’s clickstream is captured and fed back for better analysis. Any subsequent researcher who clicks on a similar SFX link has a recommender system seeded with ten billion clickstreams. This promises researchers a smarter Works Cited, so that they can see what’s relevant in their field prior to publication. Competition just got smarter.
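As a rough illustration of how clickstreams can seed recommendations, here is a minimal co-occurrence sketch in Python. It is a generic "people who opened this also opened" technique, not MESUR's or bx's actual method, and the session data and article identifiers are hypothetical. Each session stands in for the sequence of articles one IP address opened through the link resolver.

```python
from itertools import permutations

# Hypothetical session logs: each inner list is the articles one user
# (e.g. one IP address) opened through the link resolver in one session.
SESSIONS = [
    ["doi:A", "doi:B", "doi:C"],
    ["doi:A", "doi:B"],
    ["doi:B", "doi:C", "doi:D"],
    ["doi:A", "doi:C"],
]


def build_cooccurrence(sessions):
    """Count how often each ordered pair of distinct articles shares a session."""
    counts = {}
    for session in sessions:
        # set() so an article reopened within one session is counted once
        for a, b in permutations(set(session), 2):
            counts[(a, b)] = counts.get((a, b), 0) + 1
    return counts


def recommend(article, counts, k=2):
    """Return up to k articles most often co-accessed with `article`."""
    scores = {b: n for (a, b), n in counts.items() if a == article}
    # Sort by descending count, breaking ties alphabetically for stable output.
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return [b for b, _ in ranked[:k]]


counts = build_cooccurrence(SESSIONS)
print(recommend("doi:A", counts))  # ['doi:B', 'doi:C']
```

Every new session adds to the counts, which is the sense in which each researcher's clickstream is "fed back": later users who land on `doi:A` inherit the paths earlier users took from it.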

A standards-based way of description

Attention.xml, first proposed in 2004 as an open standard by Technorati technologist Tantek Çelik and journalist Steve Gillmor, promised to give priority to the items users want to see. The problem it addressed, articulated five years ago, was that feed overload is real: keeping up with new items, and with what friends are reading, requires a standard that allows for collaborative reading and organizing.

The standard seems to have been absorbed into Technorati, but the concept lives on in the latest beta of Apple’s Safari browser, which lists Top Sites by usage and recent history, as does Firefox’s Speed Dial. And of course, Google Reader has Top Recommendations, which tries to turn the enormous corpus of data it collects into useful information.

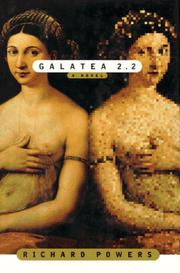

Richard Powers’ novel Galatea 2.2 describes an attempt to train a neural network to recognize the Great Books, and its narrator finds socializing online to be a failing project: “The web was a neighborhood more efficiently lonely than the one it replaced. Its solitude was bigger and faster. When relentless intelligence finally completed its program, when the terminal drop box brought the last barefoot, abused child on line and everyone could at last say anything to everyone else in existence, it seemed to me we’d still have nothing to say to each other and many more ways not to say it.” Machine learning has its limits, not least whether the human chooses to pay attention to the machine in a hyper or a deep way.

Hunch, a web application designed by Caterina Fake, co-founder of Flickr, is a new example of machine learning. The site offers to “help you make decisions and gets smarter the more you use it.” After signing up, you’re given a list of questions about your preferences. Some are standard marketing questions, like how many people live in your household, but others are clever or winsome. The answers are used to construct a probability model, which is applied when you complete the prompt “Today, I’m making a decision about…” Because the application is a work in progress, it’s not yet a replacement for a clever reference librarian, even if its model is quite similar to the classic reference interview. It turns out that machines are best at giving advice about other machines; if the list of results drew on something larger than the open Web, the technology could represent a leap forward. Already, it does a brilliant job of applying deep attention to the hypersprawling web of information.

How to Achieve True Greatness

Privacy has long since reverted to the norms of small-town America before World War II, and our sense of self is next on the block. This is as old as the Renaissance court described in Baldesar Castiglione’s The Book of the Courtier and as new as Twitter, the new party line, which offers ambient awareness of people and events.

In this age of information overload, it may seem paradoxical that technology could solve a problem it created. And yet, since the business model of the 21st century is based on data and widgets made of code, not things, there is plenty of incentive to fix the problem of attention. Remember, Google started as a way to assign importance based on who was linking to whom.
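The idea of ranking by "who links to whom" can be sketched in a few lines. What follows is a simplified, textbook-style PageRank computed by power iteration, not Google's production ranking; the four-page link graph and the damping factor of 0.85 are illustrative assumptions. A page's score is the share of attention flowing to it from the pages that link to it.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Simplified PageRank by power iteration.

    `links` maps each page to the list of pages it links to. A page with no
    outgoing links spreads its rank evenly over all pages.
    """
    pages = sorted(set(links) | {p for targets in links.values() for p in targets})
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}  # start with attention spread evenly
    for _ in range(iterations):
        # Every page gets a small baseline share (the "random jump").
        new = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                # Split this page's rank equally among the pages it links to.
                share = damping * rank[page] / len(targets)
                for t in targets:
                    new[t] += share
            else:
                for t in pages:
                    new[t] += damping * rank[page] / n
        rank = new
    return rank


# A hypothetical four-page web: C is linked to by every other page.
WEB = {"A": ["C"], "B": ["C"], "C": ["A"], "D": ["C"]}
ranks = pagerank(WEB)
print(max(ranks, key=ranks.get))  # C
```

Page C ranks highest because three pages point to it, and A ranks second because the highly ranked C passes its attention on to A alone; B and D, which nobody links to, keep only the baseline share.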

This balance is probably best handled by libraries, with their obsessive attention to user privacy and reader needs; librarians stand at the frontier between the machine and the person. The open question is whether the need to curate attention will overwhelm those doing the filtering.